Creating a Chatbot

Posted by Pranjal on September 2, 2017

What we intended to do

We wanted to develop competency in creating and managing an AI-based chatbot that could respond to messages from users automatically. We started with an in-house project: a chatbot for SchoolAdmissionIndia.com. We chose SchoolAdmissionIndia.com because we have been running the platform for many years, so we know the space well, and in previous years we used WhatsApp for chat-based interaction with users, so we had old chat data to refer to.

What we tried

We looked at multiple online platforms for building the chatbot. We investigated Chatfuel and similar services, but at the time they only supported very basic flows: you had to remodel your website's content into a chat-like format, and users had no option to type in whatever they wanted to ask. Next we explored AI-based platforms such as WIT.ai and API.ai, and based on an initial investigation we proceeded further with WIT.ai. It did support machine learning (ML) based "intent" identification, but our ability to fiddle with the ML part was very limited. So the only option left was to create our own framework and build the chatbot on top of it. This was a daunting task, since it required an understanding not only of AI/ML but also of natural language processing (NLP), which is a vast topic in itself. However, we were up for it and started the process.

Architecture

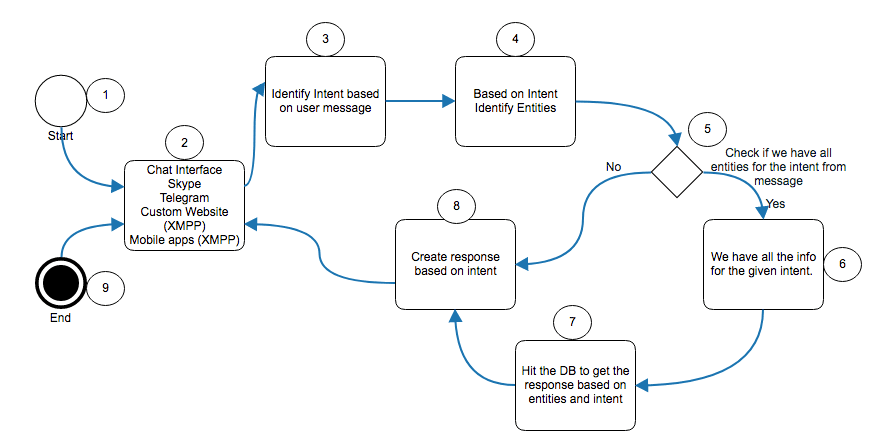

Based on the systems we tried, we defined a high-level architecture for the chatbot. The individual elements of the bot are shown in the flowchart above.

The items below refer to Figure 1 above:

1. Step 1 was to get messages from users.

2. To get messages from users, we integrated with Skype and Telegram, so messages that users send on either platform are delivered directly to the bot system, and the bot responds on the same platform. However, since we wanted the same bot to be available on the website as well as on the site's mobile applications, we created our own chat clients based on the XMPP protocol and integrated them with an XMPP server. This XMPP server was modified to send messages to the chatbot API instead of to a user on the admin interface.

3. Based on the chats from previous years, we defined "intents". An intent captures what the user wants from the message they have sent. For example, a user could say something like "Please tell me dates when admission form for DPS Noida will be out". With this message the user is actually asking for "admission form release" information. Besides the intents related to the admission process, we also defined some generic intents such as "Greeting", "Unknown", etc.

4. Once we knew the intent, the next step was to identify entities in the message. For example, in the message "Please tell me dates when admission form for DPS Noida will be out", the user is asking about "admission form release", but to answer we need to know which school the user is asking about and which city that school is in. Parsing this sentence, we find that both entities are present: "DPS Noida" is the school and "Noida" is the city. A chatbot may need to support many types of entities, such as dates, locations, school names, and person names.

5. However, users do not necessarily provide all the information (entities) in one sentence, so the bot needs to go over the entities and check whether it has all the required information.

6. The bot then checks whether it has all the required entities.

7. If it has all the information, it queries the database for the answer to the question and passes that answer to the class that creates the actual text response.

8. If the user has not provided all the information, the bot sets up a user context with the information provided and creates a response that is itself a question asking for the missing entity. If all the entities were provided and we got the response data, this step creates a logical answer and sends it back to the user.

9. The user then receives a response based on their input.
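Steps 4 to 8 above can be sketched roughly as follows. This is a minimal, hypothetical illustration under stated assumptions, not our production code: entity extraction is a naive substring lookup over an assumed list of schools and cities, the required entities per intent and the "database" are hard-coded stand-ins with dummy data, and the intent is assumed to have already been detected in step 3. `UserContext`, `extract_entities`, `respond`, `SCHOOLS`, `CITIES`, `REQUIRED` and `FORM_DATES` are names invented for this sketch.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

# Hypothetical gazetteer: lowercase school name -> (display name, city).
SCHOOLS: Dict[str, Tuple[str, str]] = {
    "dps noida": ("DPS Noida", "Noida"),
    "dps rk puram": ("DPS RK Puram", "Delhi"),
}
# Hypothetical gazetteer of cities.
CITIES: Dict[str, str] = {"noida": "Noida", "delhi": "Delhi"}
# Hypothetical: entities each intent needs before it can be answered (step 5).
REQUIRED: Dict[str, List[str]] = {"AdmissionFormRelease": ["school", "city"]}
# Hypothetical stand-in for the database queried in step 7 (dummy data).
FORM_DATES: Dict[Tuple[str, str], str] = {("DPS Noida", "Noida"): "1 December"}


@dataclass
class UserContext:
    """Per-user state kept between messages (step 8)."""
    intent: Optional[str] = None
    entities: Dict[str, str] = field(default_factory=dict)


def extract_entities(text: str) -> Dict[str, str]:
    """Step 4: naive substring lookup of known schools and cities."""
    lowered = text.lower()
    found: Dict[str, str] = {}
    for key, (school, city) in SCHOOLS.items():
        if key in lowered:
            found["school"] = school
            found["city"] = city  # a known school also tells us its city
    for key, city in CITIES.items():
        if key in lowered and "city" not in found:
            found["city"] = city
    return found


def respond(text: str, ctx: UserContext, intent: Optional[str] = None) -> str:
    """Steps 5-8. Pass intent=None for a follow-up that answers the bot's question."""
    if intent is not None and intent != ctx.intent:
        ctx.intent, ctx.entities = intent, {}  # new question: reset the context
    if ctx.intent not in REQUIRED:
        return "Sorry, I can only answer admission form questions in this sketch."
    ctx.entities.update(extract_entities(text))
    # Steps 5-6: which required entities are still missing?
    missing = [name for name in REQUIRED[ctx.intent] if name not in ctx.entities]
    if missing:
        # Step 8: keep the context and ask for the first missing entity.
        return f"Which {missing[0]} are you asking about?"
    # Step 7: look up the answer and turn it into a text response.
    school, city = ctx.entities["school"], ctx.entities["city"]
    date = FORM_DATES.get((school, city))
    if date is None:
        return f"Sorry, we do not have form dates for {school} yet."
    return f"Admission forms for {school} are expected on {date}."


ctx = UserContext()
print(respond("When will admission forms be out?", ctx, "AdmissionFormRelease"))
print(respond("DPS Noida", ctx))
```

The first call asks for the school because neither entity is present; the second call reuses the stored intent and completes the answer. A real implementation would need word-boundary and fuzzy matching of school names rather than plain substring checks.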

Additional Notes:

Machine learning is currently used only at step 3. From past years' chat data we had a list of the questions users generally ask, so we were able to map questions to intents and train a machine learning model that maps text to an intent. If the text did not map to any intent, we mapped it to "Unknown" and gave the user an appropriate response.
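A minimal sketch of the text-to-intent mapping, assuming a bag-of-words multinomial Naive Bayes model (the starting point mentioned in the ML learnings below) and a hypothetical posterior-probability threshold below which a message falls back to "Unknown". The class name, the threshold value, and the training pairs are illustrative assumptions, not our production model or data.

```python
import math
import re
from collections import Counter, defaultdict
from typing import Dict, List, Set, Tuple


def tokenize(text: str) -> List[str]:
    """Lowercase bag-of-words tokens (letters and digits only)."""
    return re.findall(r"[a-z0-9]+", text.lower())


class NaiveBayesIntentClassifier:
    """Multinomial Naive Bayes over word counts, with add-one (Laplace) smoothing."""

    def __init__(self, unknown_threshold: float = 0.6) -> None:
        # Hypothetical cut-off: if the best intent's posterior is below this,
        # the message is mapped to "Unknown".
        self.unknown_threshold = unknown_threshold
        self.word_counts: Dict[str, Counter] = defaultdict(Counter)
        self.doc_counts: Counter = Counter()
        self.vocab: Set[str] = set()

    def fit(self, examples: List[Tuple[str, str]]) -> "NaiveBayesIntentClassifier":
        for text, intent in examples:
            self.doc_counts[intent] += 1
            self.word_counts[intent].update(tokenize(text))
        self.vocab = {w for counts in self.word_counts.values() for w in counts}
        return self

    def posteriors(self, text: str) -> Dict[str, float]:
        n_docs = sum(self.doc_counts.values())
        tokens = [t for t in tokenize(text) if t in self.vocab]  # ignore unseen words
        log_scores = {}
        for intent, counts in self.word_counts.items():
            total = sum(counts.values())
            score = math.log(self.doc_counts[intent] / n_docs)  # log prior
            for t in tokens:
                score += math.log((counts[t] + 1) / (total + len(self.vocab)))
            log_scores[intent] = score
        # Normalise in log space (subtract the max) to avoid underflow.
        top = max(log_scores.values())
        exp = {i: math.exp(s - top) for i, s in log_scores.items()}
        norm = sum(exp.values())
        return {i: e / norm for i, e in exp.items()}

    def predict(self, text: str) -> str:
        probs = self.posteriors(text)
        best = max(probs, key=probs.get)
        return best if probs[best] >= self.unknown_threshold else "Unknown"


# Hypothetical training pairs in the spirit of past chat data.
EXAMPLES = [
    ("when will admission form for dps noida be out", "AdmissionFormRelease"),
    ("admission form release date", "AdmissionFormRelease"),
    ("when do forms come out", "AdmissionFormRelease"),
    ("hi", "Greeting"),
    ("hello there", "Greeting"),
    ("good morning", "Greeting"),
]
clf = NaiveBayesIntentClassifier().fit(EXAMPLES)
print(clf.predict("hello"))                              # Greeting
print(clf.predict("admission form date for dps noida"))  # AdmissionFormRelease
print(clf.predict("what is the fee structure"))          # no known words -> Unknown
```

A message made only of unseen words leaves the posteriors equal to the class priors, so with balanced training data it lands below the threshold and is treated as "Unknown".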

We also used spelling correction based on the Levenshtein distance algorithm: before passing the text entered by the user to the intent detection system, we corrected spellings as needed.
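The Levenshtein distance is the minimum number of single-character insertions, deletions and substitutions needed to turn one word into another. A minimal sketch of dictionary-based correction with it, assuming a hypothetical domain vocabulary and a hypothetical maximum distance of 2 (neither comes from our actual system):

```python
from typing import Sequence


def levenshtein(a: str, b: str) -> int:
    """Edit distance between a and b, using two rows of the DP table."""
    prev = list(range(len(b) + 1))  # distances from "" to each prefix of b
    for i, ca in enumerate(a, start=1):
        curr = [i]  # distance from a[:i] to ""
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if equal)
            ))
        prev = curr
    return prev[-1]


def correct_word(word: str, vocab: Sequence[str], max_distance: int = 2) -> str:
    """Return the closest vocabulary word within max_distance, else the word itself."""
    if word in vocab:
        return word
    distance, best = min((levenshtein(word, v), v) for v in vocab)
    return best if distance <= max_distance else word


def correct_text(text: str, vocab: Sequence[str]) -> str:
    return " ".join(correct_word(w, vocab) for w in text.lower().split())


# Hypothetical domain vocabulary.
VOCAB = ["admission", "form", "date", "school", "when", "dps", "noida"]
print(correct_text("admision frm dte", VOCAB))  # admission form date
```

With a fixed threshold, very short chat tokens such as "u" could be "corrected" into unrelated short words, so in practice the allowed distance probably needs to depend on word length, with short forms handled separately.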

Entity recognition could also use an ML algorithm, but we did not have sufficient data to train one.

While generating the response at step 8, we wanted to add sentiment analysis as well, but existing functions do not determine sentiment very accurately. We are currently exploring how to use "word embeddings" and sentence parsing to determine the sentiment of a sentence more accurately.

Also, at step 8 the response could be dynamically generated (a generative model); however, we have restricted the bot to a retrieval-based model for now, since a generative model would be a lot more complicated.

What we learned about ML

- We understood that chat text is very different from normal text. People use a lot of short forms such as "U" and "dere", so the bot not only needs to understand such text but also needs to respond to it.

- On top of that, people mix Hindi with English, which makes the problem a lot more difficult to solve.

- For the ML model, we started with a simple bag-of-words model and then used Naive Bayes on top of it. However, that did not give us very good results.

- Then we explored 2-gram and 3-gram models, which gave slightly better results, but nothing really great.

- Then we analyzed the chat text itself, updated our spelling correction algorithm, and filtered out stop words that were not adding any value to the content.

- We also added very frequently occurring Hindi words to our vocabulary set.

- We used a modified form of the tf-idf algorithm to increase the weight of terms we thought were closer to the intent.
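The preprocessing and feature ideas in the list above can be sketched roughly as follows: expand chat short forms, drop stop words, extract 1- to 3-grams, and weight them with tf-idf. The short-form table, the stop-word list, and the `boost` dictionary are hypothetical; the post does not say how our tf-idf was modified, so `boost` is only an assumed stand-in that multiplies the weight of terms judged closer to an intent. The idf uses the common smoothed form log((1 + N) / (1 + df)) + 1.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Optional

# Hypothetical lookup of chat short forms to full words.
SHORT_FORMS = {"u": "you", "dere": "there", "plz": "please", "r": "are"}
# Hypothetical stop-word list; a real one would come from analysing the chats.
STOP_WORDS = {"please", "tell", "me", "the", "a", "is", "are", "will", "be"}


def preprocess(text: str) -> List[str]:
    """Tokenise, expand short forms, then drop stop words."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    tokens = [SHORT_FORMS.get(t, t) for t in tokens]
    return [t for t in tokens if t not in STOP_WORDS]


def ngrams(tokens: List[str], max_n: int = 3) -> List[str]:
    """All 1- to max_n-grams, each joined with single spaces."""
    return [" ".join(tokens[i:i + n])
            for n in range(1, max_n + 1)
            for i in range(len(tokens) - n + 1)]


def tfidf_vectors(docs: List[str],
                  boost: Optional[Dict[str, float]] = None) -> List[Dict[str, float]]:
    """Smoothed tf-idf over n-gram features, times an optional per-term multiplier."""
    boost = boost or {}
    grams = [Counter(ngrams(preprocess(d))) for d in docs]
    df = Counter(g for counts in grams for g in counts)  # docs containing each n-gram
    n_docs = len(docs)
    vectors = []
    for counts in grams:
        total = sum(counts.values())
        vectors.append({
            g: (c / total) * (math.log((1 + n_docs) / (1 + df[g])) + 1) * boost.get(g, 1.0)
            for g, c in counts.items()
        })
    return vectors


print(preprocess("plz tell me when u will get the form"))  # ['when', 'you', 'get', 'form']
# A code-mixed (Hindi/English) message is tokenised like any other text.
vecs = tfidf_vectors(["admission form date", "form kab aayega"],
                     boost={"admission form": 2.0})
print(vecs[0]["admission form"], vecs[0]["form"])
```

Because "form" appears in both messages it gets a lower idf than "date", while the hypothetical boost doubles the weight of "admission form"; the resulting vectors could then be fed to an intent classifier.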

With a lot of trial and error, we were finally able to increase intent matching accuracy to about 85% on our previous years' dataset.

All in all, it was a good project to carry out. We did learn a lot about creating a chatbot. However, we also realized that it is still not easy to write a bot that could even think about replacing humans anytime soon!

Business: H 92, Ground Floor, Sector 63, Noida, U.P.